P.H.R.E.D.

Project Hub for Retrieval, Execution, & Delivery

A complex mobile app, two people, and a production MVP that would have been nearly impossible to coordinate without the right structure. This is the operating methodology that made it workable: the stack, the source-of-truth system, the workflow patterns, and an orchestration agent that keeps AI-assisted work connected instead of scattered.

Shared as a public case study in practical AI orchestration, not AI theater.

Applied case study

Context

The Need

The project that motivated this was complex. Confidential product, small team, limited budget, aggressive timeline.

The problem with AI-assisted work isn’t output. It’s that the output goes everywhere. Product decisions in one doc. Design exploration in another. Tasks created without context. AI conversations that go nowhere. Nothing connects.

The answer was to build the operating system, Project OS, before accelerating the work. It gives AI tools enough structure to actually help: planning, tasks, handoffs, QA, documentation. And it keeps humans in control of scope, privacy, and decisions that can’t be undone. The product details stay confidential. The methodology doesn’t.

Overview

The system

This is the stack. These are the tools and patterns in active use, with Slack still planned.

Google Workspace

Working docs, meeting notes, brainstorming, shared context

Asana

Roadmap, Gantt, tasks, dependencies, milestones, weekly status

Figma

Design source of truth, components, tokens, platform guardrails, handoff

GitHub

Versioned methodology docs, implementation notes, issues, commits, and proof of work

Claude Code

Agent-assisted planning, repo work, architecture review, Asana automation

Claude Design

Design exploration, creative iteration, concept review, visual direction

Codex

Setup support, code assistance, implementation review, structured file edits

Slack

Planned team communication and agent notification surface

MCP

Controlled bridge between AI agents and external tools

Project OS

Source-of-truth patterns, rules, reusable prompts, approval gates, and context management conventions

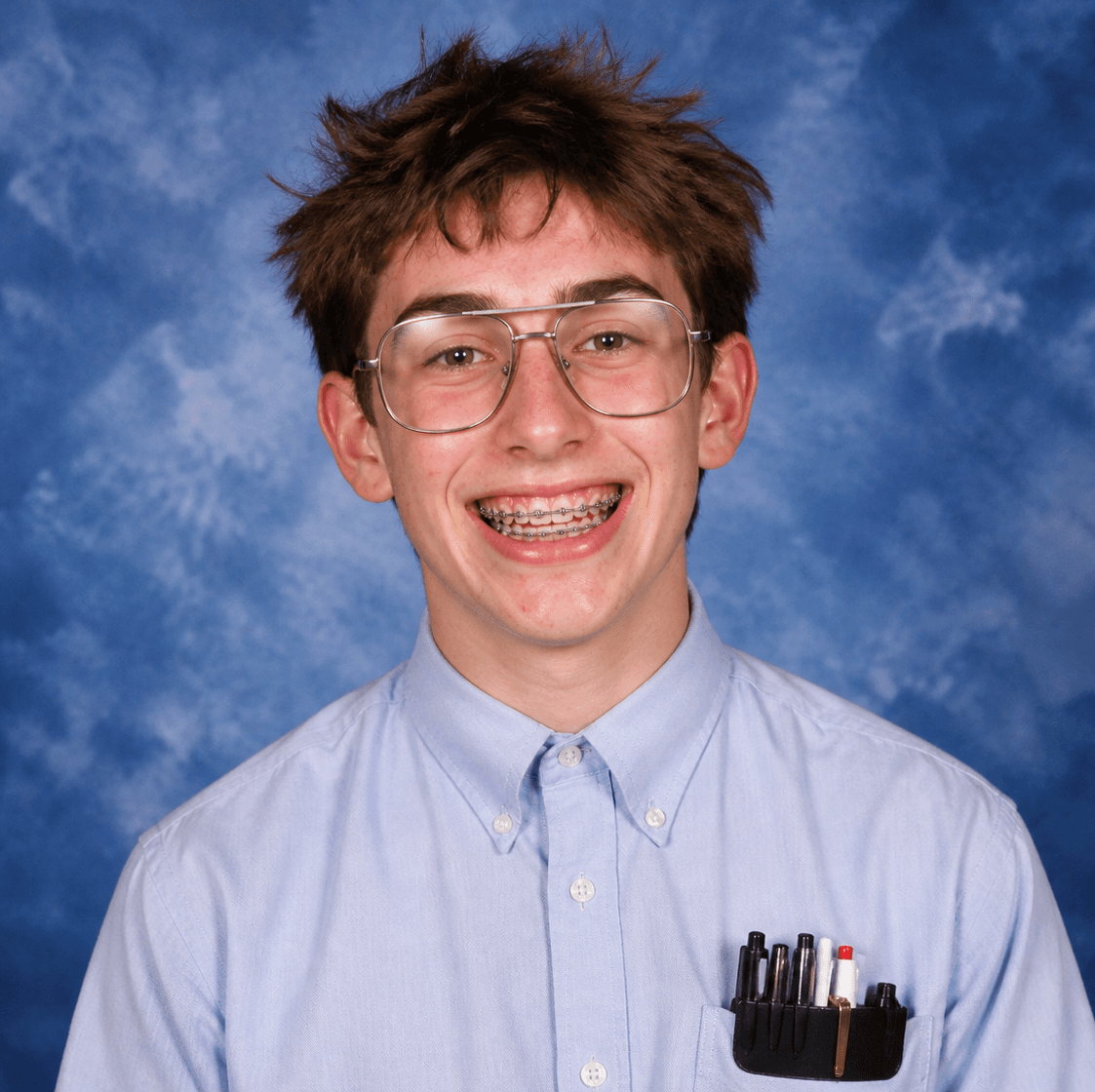

Phred

Orchestration-agent pattern: reads approved context, proposes actions, flags risks, and prepares handoffs for human review

System map

How the operating layer works

Phred doesn’t replace human judgment. He makes sure fewer things fall through the cracks before the work reaches you.

Information architecture

What counts as truth?

Not every document carries the same weight. The system helps AI-assisted workflows know the difference between a committed decision and a half-finished note.

This is what stops an AI tool from treating a brainstorm like a committed requirement.
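The exact tiers used in Project OS aren't listed here, so this minimal Python sketch assumes three hypothetical statuses (approved, open, brainstorm) to show the idea: every doc carries a status, only approved docs feed the requirements context, and each doc is labeled with its weight when it goes into a prompt. The names `Status`, `Doc`, `requirements_context`, and `label_for_prompt` are illustrative, not part of Project OS.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    # Hypothetical tiers; the real Project OS tiers are not published.
    APPROVED = "approved"      # committed decision: may be treated as a requirement
    OPEN = "open"              # still under discussion: cite it, don't build on it
    BRAINSTORM = "brainstorm"  # exploration only: never a requirement


@dataclass(frozen=True)
class Doc:
    title: str
    status: Status


def requirements_context(docs: list[Doc]) -> list[Doc]:
    """Keep only the docs an agent may treat as committed requirements."""
    return [d for d in docs if d.status is Status.APPROVED]


def label_for_prompt(doc: Doc) -> str:
    """Prefix a doc title with its status so the model sees its weight."""
    return f"[{doc.status.value.upper()}] {doc.title}"


# Hypothetical sample docs.
docs = [
    Doc("MVP scope v3", Status.APPROVED),
    Doc("Onboarding ideas", Status.BRAINSTORM),
    Doc("Pricing questions", Status.OPEN),
]
print([d.title for d in requirements_context(docs)])  # ['MVP scope v3']
print(label_for_prompt(docs[1]))                      # [BRAINSTORM] Onboarding ideas
```

The point of the design is that the brainstorm is still visible to the agent, just never in the slot reserved for requirements.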

Workflow

Design to code

Explore & design

Review & hand off

Build & ship

Design exploration, approved design, engineering, and QA stay connected. The confidential specifics don’t need to be here for the workflow to make sense.
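One way to keep the three stages connected is to make the handoff a gate: work can't enter Build & ship until it links to an approved design. This is a minimal sketch, assuming a hypothetical `WorkItem` record whose `approved_design` field stands in for a link to the approved Figma source; none of these names come from the actual project.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Stage(Enum):
    EXPLORE = "explore & design"
    HANDOFF = "review & hand off"
    BUILD = "build & ship"


# Forward-only transitions; stages cannot be skipped.
NEXT_STAGE = {Stage.EXPLORE: Stage.HANDOFF, Stage.HANDOFF: Stage.BUILD}


@dataclass
class WorkItem:
    name: str
    stage: Stage = Stage.EXPLORE
    # Hypothetical reference to the approved design; None until a human approves it.
    approved_design: Optional[str] = None


def advance(item: WorkItem) -> WorkItem:
    """Move an item one stage forward; entering BUILD requires an approved design."""
    if item.stage not in NEXT_STAGE:
        raise ValueError(f"{item.name} is already in the final stage")
    target = NEXT_STAGE[item.stage]
    if target is Stage.BUILD and not item.approved_design:
        raise PermissionError(f"{item.name}: no approved design linked, needs human review")
    item.stage = target
    return item


screen = WorkItem("Profile screen")
advance(screen)                      # explore & design -> review & hand off
try:
    advance(screen)                  # blocked: nothing approved yet
except PermissionError as err:
    print(err)                       # Profile screen: no approved design linked, needs human review
screen.approved_design = "design-review-001"  # hypothetical approval reference
advance(screen)
print(screen.stage.value)            # build & ship
```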

Orchestration

Meet Phred

Phred has a job. Read the right context, identify what changed, propose the next action, and flag what needs a human. No broad authority. No autonomous decisions. A well-defined role for a well-defined tool.

What Phred can do

- Summarize current project state from approved sources

- Read selected methodology and project-context docs

- Draft proposed tasks for review

- Flag missing source-of-truth links

- Identify blockers and stale tasks

- Prepare weekly status drafts

- Extract decisions and open questions from notes

- Prepare design-to-code handoff summaries

- Convert QA findings into proposed tasks

What Phred cannot do

- Approve MVP or roadmap scope changes

- Change permissions or billing

- Access secrets

- Send external communications

- Move future-phase work into current-phase scope

- Approve privacy or security decisions

- Merge production code

- Treat brainstorms as requirements
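The two lists above translate naturally into a default-deny policy check. This sketch assumes hypothetical action names that mirror each bullet; it is an illustration of the boundary, not Phred's actual implementation. The design choice worth noting is that anything not on the allowlist is refused, so a new or misspelled action fails closed instead of slipping through.

```python
# Hypothetical action names mirroring "What Phred can do".
ALLOWED_ACTIONS = frozenset({
    "summarize_state", "read_context_docs", "draft_task", "flag_missing_source",
    "flag_blockers_and_stale_tasks", "draft_weekly_status", "extract_decisions",
    "draft_handoff_summary", "draft_task_from_qa",
})

# Hypothetical action names mirroring "What Phred cannot do". Listed so a refusal
# can explain itself; authorize() denies anything not allowed regardless.
FORBIDDEN_ACTIONS = frozenset({
    "approve_scope_change", "change_permissions", "change_billing", "read_secret",
    "send_external_message", "move_future_phase_work", "approve_privacy_or_security",
    "merge_production_code", "treat_brainstorm_as_requirement",
})


def authorize(action: str) -> tuple[bool, str]:
    """Default-deny check: only explicitly allowed actions pass."""
    if action in ALLOWED_ACTIONS:
        return True, "allowed; result goes to a human for review"
    if action in FORBIDDEN_ACTIONS:
        return False, "not permitted for Phred; route to a human owner"
    return False, "unknown action; denied by default"


print(authorize("draft_weekly_status"))    # (True, 'allowed; result goes to a human for review')
print(authorize("merge_production_code"))  # (False, 'not permitted for Phred; route to a human owner')
```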

Integration map

Touchpoints

Planning

- Project OS

- Google Workspace

- Asana

Project OS defines what's approved. Everything else (docs, tasks, meetings) connects to that foundation.

Design

- Figma

- AI Design Exploration

- Figma Dev Mode

One place for visual decisions. AI speeds up exploration. A structured handoff makes sure engineering gets what it needs.

Engineering

- GitHub

- Claude Code

- Codex

- React Native / Expo

The repo holds methodology docs, notes, and proof of work. AI coding tools assist with implementation; humans review before anything ships.

Automation

- MCP

- Asana

- Phred project-manages all Asana and meeting tasks

- Weekly status workflow

Phred routes context between systems. MCP keeps the integrations controlled. What surfaces for a human is what needs a decision.
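A weekly status draft of the kind described above could be assembled from task data along these lines. The sketch assumes tasks have already been exported into simple `Task` records (it does not call the Asana API) and uses a hypothetical seven-day staleness threshold; the output is a draft for human review, not something posted automatically.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Task:
    name: str
    last_updated: date
    blocked_by: tuple[str, ...] = ()
    done: bool = False


STALE_AFTER = timedelta(days=7)  # hypothetical threshold


def weekly_status_draft(tasks: list[Task], today: date) -> str:
    """Build a plain-text status draft for human review; nothing is posted anywhere."""
    open_tasks = [t for t in tasks if not t.done]
    stale = [t.name for t in open_tasks if today - t.last_updated > STALE_AFTER]
    blocked = [f"{t.name} (waiting on {', '.join(t.blocked_by)})" for t in open_tasks if t.blocked_by]
    done = [t.name for t in tasks if t.done]
    lines = [
        f"DRAFT weekly status for {today.isoformat()}",
        f"Done: {', '.join(done) or 'none'}",
        f"Blocked: {'; '.join(blocked) or 'none'}",
        f"Stale (> {STALE_AFTER.days} days): {', '.join(stale) or 'none'}",
    ]
    return "\n".join(lines)


# Hypothetical sample tasks.
tasks = [
    Task("Auth flow QA", date(2025, 1, 14), done=True),
    Task("Push notification spec", date(2025, 1, 2)),
    Task("Settings screen build", date(2025, 1, 13), blocked_by=("API contract",)),
]
print(weekly_status_draft(tasks, date(2025, 1, 15)))
# DRAFT weekly status for 2025-01-15
# Done: Auth flow QA
# Blocked: Settings screen build (waiting on API contract)
# Stale (> 7 days): Push notification spec
```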

Quality Control

- QA planning across mobile platforms

- Event simulation mode

- UAT

QA plans run against approved requirements. Simulation and UAT run before production. The confidential specifics don't need to be here.

Controls

Why the guardrails matter

AI gets more useful when the rules are explicit. Project OS tells agents what’s approved, what’s open, what’s off-limits, and when to stop and ask.

- Current-phase versus future-phase separation

- Approval gates before scope changes

- Privacy and permissions rules

- Platform-specific implementation guardrails

- Offline, stale-state, or failure-mode requirements where relevant

- Simulation and UAT requirements

- Design system discipline

- No secrets in docs or prompts

- Human approval required for high-risk changes
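The "No secrets in docs or prompts" rule is one guardrail that can be checked mechanically before any text is sent to a model. This is a minimal sketch with a few illustrative regular expressions (AWS access key IDs, classic GitHub personal access tokens, PEM private-key headers, and `password: value` style assignments). The pattern set is an assumption and far from exhaustive; a dedicated secret scanner would be the real choice.

```python
import re

# Illustrative patterns only; not a complete or authoritative secret detector.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "private_key": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
    "assigned_secret": re.compile(r"(?i)\b(?:api[_-]?key|secret|password)\s*[:=]\s*\S+"),
}


def preflight(prompt: str) -> list[str]:
    """Return names of matched patterns; an empty list means no known secret shape was found."""
    return [name for name, pattern in SECRET_PATTERNS.items() if pattern.search(prompt)]


print(preflight("Summarize the QA notes from Tuesday"))          # []
print(preflight("Use password: hunter2 for the staging login"))  # ['assigned_secret']
```

A non-empty result would stop the request and hand it to a human, in line with the stop-and-ask rule above.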

What’s missing

Future methodology extensions

RAG / Retrieval Layer

- Better context control: less raw content dumped into models, more structured retrieval

- A proper retrieval layer for approved docs instead of injecting full markdown into every prompt
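A retrieval layer of the kind listed above would send the model only the most relevant chunks of approved docs rather than whole markdown files. The sketch below uses plain term overlap as a crude stand-in for embedding search; the function names, the paragraph-based chunking, and the sample docs are assumptions for illustration. A real layer would add embeddings, chunk metadata, and each doc's source-of-truth status.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens; a real layer would use embeddings instead."""
    return re.findall(r"[a-z0-9]+", text.lower())


def chunk(doc_text: str) -> list[str]:
    """Split a markdown doc into paragraph-sized chunks."""
    return [p.strip() for p in doc_text.split("\n\n") if p.strip()]


def retrieve(query: str, approved_docs: dict[str, str], k: int = 3) -> list[tuple[str, str]]:
    """Return up to k (doc name, chunk) pairs ranked by query-term overlap."""
    query_terms = Counter(tokenize(query))
    scored = []
    for name, text in approved_docs.items():
        for piece in chunk(text):
            piece_terms = Counter(tokenize(piece))
            score = sum(min(count, piece_terms[term]) for term, count in query_terms.items())
            if score > 0:
                scored.append((score, name, piece))
    scored.sort(key=lambda item: item[0], reverse=True)  # stable: ties keep doc order
    return [(name, piece) for _, name, piece in scored[:k]]


# Hypothetical approved docs; only these are ever searched.
approved = {
    "mvp-scope.md": "Offline mode is in scope for the MVP.\n\nSocial sharing is a future-phase feature.",
    "qa-plan.md": "Run UAT on both iOS and Android before release.",
}
print(retrieve("is offline mode in the MVP", approved, k=1))
# [('mvp-scope.md', 'Offline mode is in scope for the MVP.')]
```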

Specialized Sub-agents

- Repetitive process support

- QA review

- Requirements auditing

- Platform-specific guardrail review

Takeaways

What this proves

Systems thinking

Built the operating model before the product. Rework is expensive. Context loss is more so.

AI orchestration

Connected AI to real product, design, engineering, and QA workflows. Human approval stayed in the loop where it mattered.

Creative operations

Converted ambiguity into structure, tasks, handoffs, and decision paths.

Technical fluency

The tool stack is real: Claude Code, Codex, MCP, GitHub, Figma, Asana, React Native / Expo. Each one chosen for a reason.

Product judgment

Kept humans in control of privacy, permissions, scope, and anything that can't be undone.

Public methodology note

Reusable by design

This methodology is reusable. The patterns (source-of-truth structure, approval gates, agent boundaries, decision logs) can apply to any team that needs AI-assisted work to stay connected and under control.

What’s not here: the specific product, company, code, designs, or business details. Those are confidential. Everything else is fair game.

This is the kind of AI work I’m interested in.

Not prompts for the sake of prompts. Not AI theater. Practical systems that help small teams move faster without losing control of the product, the roadmap, or the standards.

Built by Randall Fransen. Applied during work on a confidential mobile app project. Product specifics stay confidential.